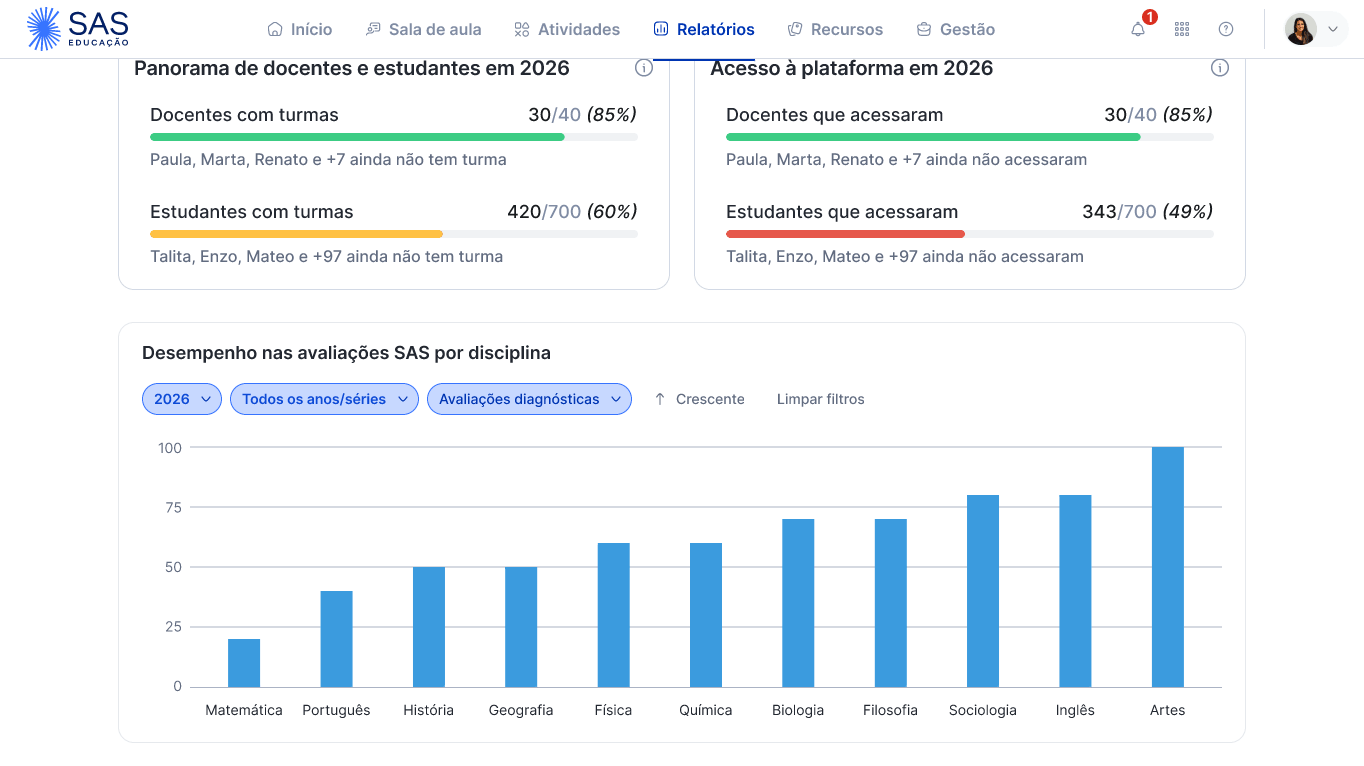

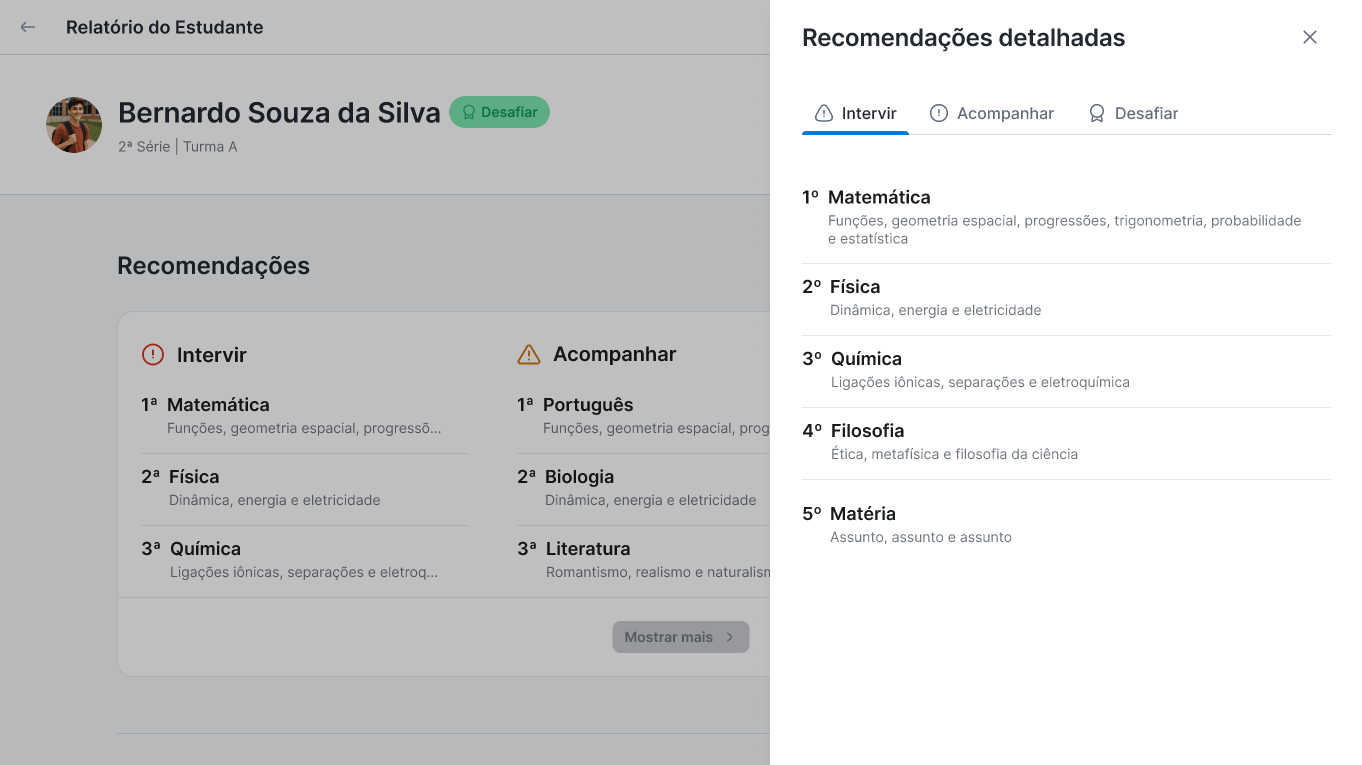

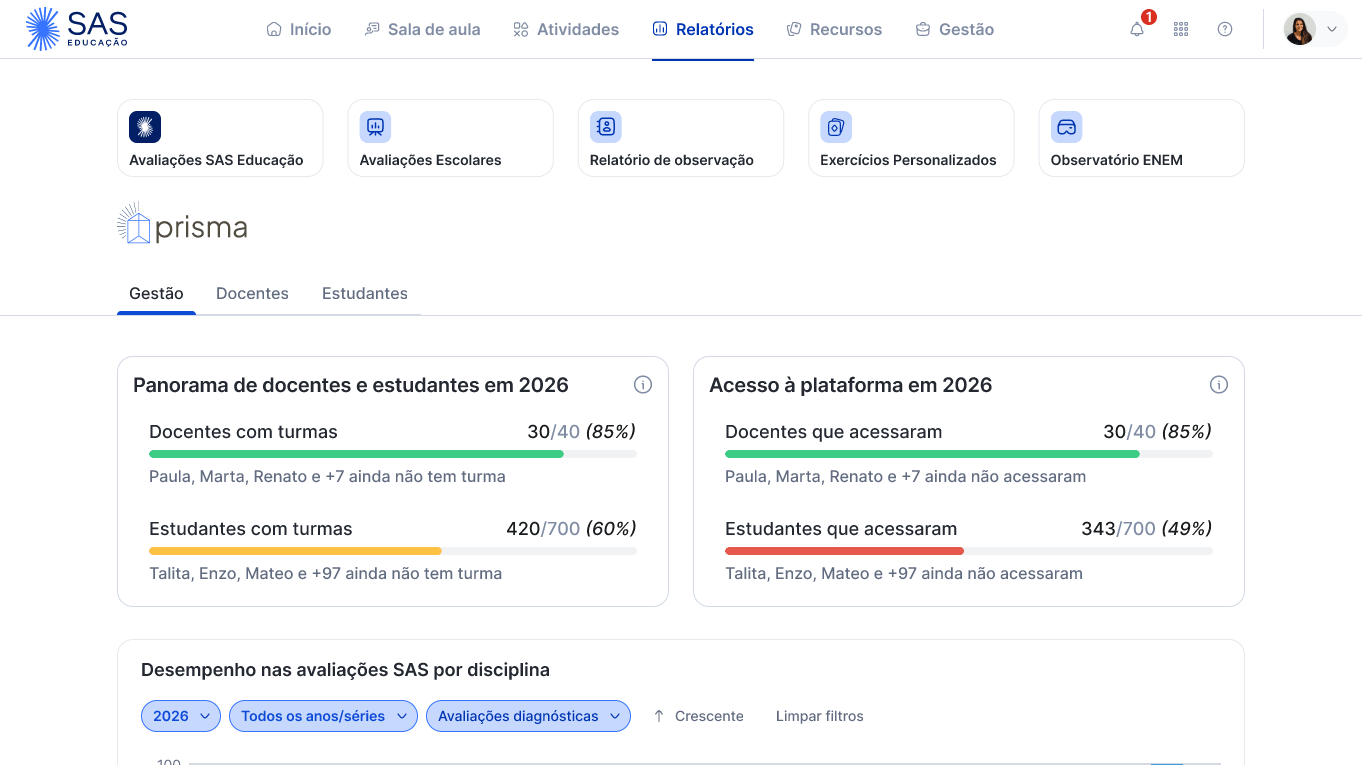

The goal was clear: consolidate every aspect of students' pedagogical routine on the platform (homework, assessments, and study habits) into a single experience. The result would be a live dashboard that teachers, coordinators, and principals could consult daily to make pedagogical decisions with greater confidence.

Within assessments, one front was especially valuable: external assessments, practice tests replicating the Enem format. They would give principals a comparative view across schools, coordinators a subject-by-subject map, and students a clear picture of where to improve.

The deadline was November, so that the product would be live by the start of the 2026 school year.

The initial vision

Product in delivery mode

When I joined as design lead in June 2025, the team had already conducted several rounds of research and built a vision that appeared to be aligned with stakeholders. The work seemed to be in delivery mode, not discovery.

The conflict uncovered

Data that didn't speak to each other

Terms like "performance" and "participation" had different definitions depending on context. When we tried to consolidate everything into a single view, the data didn't connect, and what had been designed previously would not add up to a coherent product.

The public expectation

Presented at Bett Educar

The product had been presented at Bett Educar, the largest education event in Latin America. Schools and principals were excited. The expectation was real, and it was public. Yet students, teachers, coordinators, and principals each expected something different.